gRPC is a communication protocol for services, built on HTTP/2. Unlike REST over HTTP/1, which is based on resources, gRPC is based on service definitions. You specify service definitions in a format called protocol buffers (“proto”), and the messages they describe are serialized into a small binary format for transmission.
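
To make the "small binary format" concrete, here is a minimal sketch of how protocol buffers encode a single string field on the wire, using only the Python standard library. The message shape (a request with `string name = 1;`) is a hypothetical example and not taken from the demo app. Real applications would use generated code rather than hand-rolled encoding.

```python
import json


def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a protobuf base-128 varint."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative integers")
    out = bytearray()
    while True:
        byte = value & 0x7F          # take the low 7 bits
        value >>= 7
        if value:
            out.append(byte | 0x80)  # set the continuation bit: more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def encode_string_field(field_number: int, text: str) -> bytes:
    """Encode one string field as key, length, then UTF-8 payload."""
    length_delimited = 2  # wire type used for strings, bytes, and nested messages
    key = (field_number << 3) | length_delimited
    payload = text.encode("utf-8")
    return encode_varint(key) + encode_varint(len(payload)) + payload


# Hypothetical request message with `string name = 1;`
wire = encode_string_field(1, "world")
as_json = json.dumps({"name": "world"}).encode("utf-8")

print(wire.hex())
print(len(wire), "bytes as protobuf vs", len(as_json), "bytes as JSON")
```

The binary form carries the field number instead of the field name, which is why it is shorter than the equivalent JSON.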

With gRPC, you can generate boilerplate client and server code from .proto files in multiple programming languages, making gRPC a good fit for polyglot microservices.

While gRPC supports some networking use cases like TLS and client-side load balancing, adding Istio to a gRPC architecture can be useful for collecting telemetry, adding traffic rules, and setting RPC-level authorization. Istio can also provide a useful management layer if your traffic is a mix of HTTP, TCP, gRPC, and database protocols, because you can use the same Istio APIs for all traffic types.

Istio and its data plane proxy, Envoy, both support gRPC. Let’s see how to manage gRPC traffic with Istio.

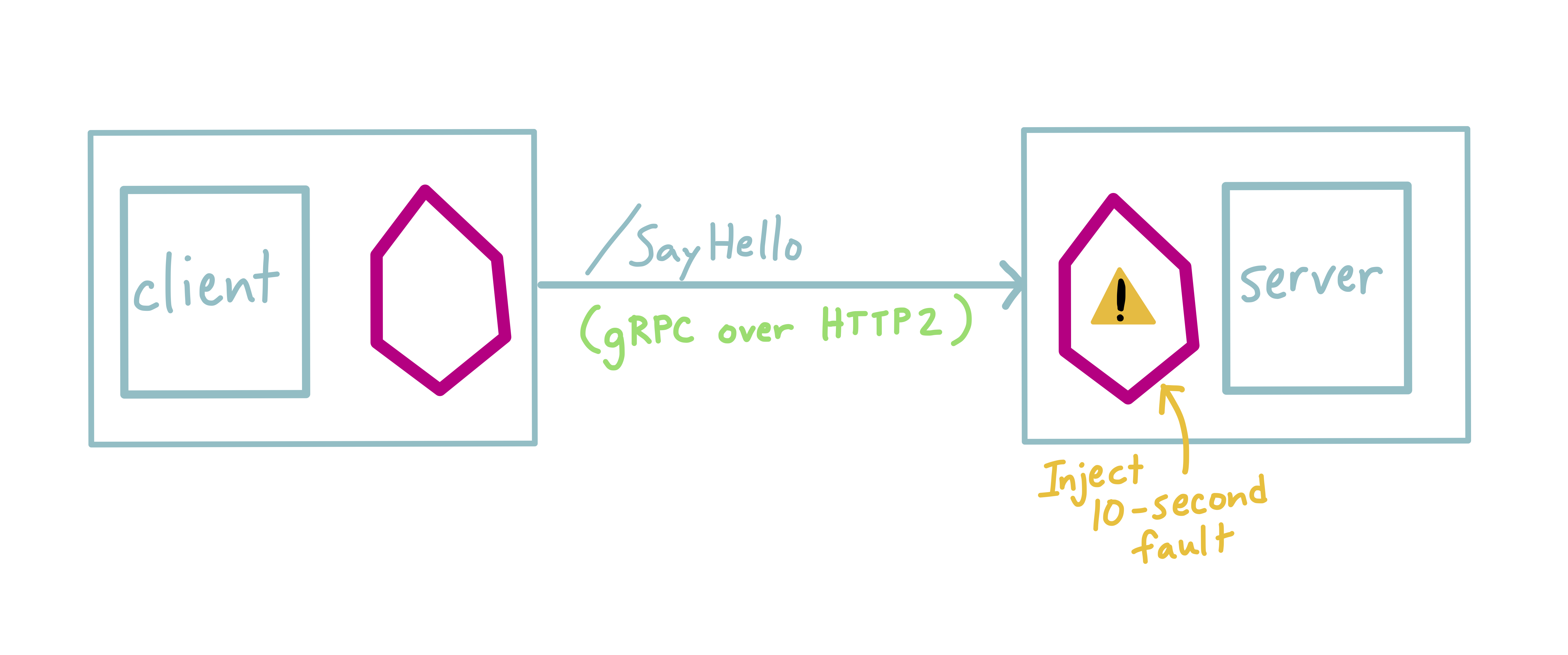

Here, we’re running two gRPC services, client and server. The client makes an RPC call to the server’s /SayHello function every 2 seconds.

Adding Istio to gRPC Kubernetes services has one prerequisite: naming your Kubernetes Service ports so that Istio can identify the protocol. The server’s port is named as follows:

    apiVersion: v1
    kind: Service
    metadata:
      name: server
    spec:
      selector:
        app: server
      type: ClusterIP
      ports:
      - name: grpc # important!
        protocol: TCP
        port: 8080
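
The port name matters because Istio reads the protocol from names of the form `<protocol>[-<suffix>]`. The check below is a rough sketch of that convention, handy for linting manifests before deploying. The `infer_protocol` helper and its prefix list are illustrative assumptions, not Istio code, and the list is only a subset of the protocols Istio recognizes.

```python
from typing import Optional

# Illustrative subset of protocol prefixes; longer names come first so that
# "grpc-web" is not mistaken for "grpc" with a "-web" suffix.
KNOWN_PROTOCOLS = ("grpc-web", "grpc", "http2", "https", "http", "tcp", "tls")


def infer_protocol(port_name: str) -> Optional[str]:
    """Return the protocol implied by a Service port name, or None if unrecognized."""
    for proto in KNOWN_PROTOCOLS:
        if port_name == proto or port_name.startswith(proto + "-"):
            return proto
    return None


for name in ("grpc", "grpc-server", "server"):
    print(name, "->", infer_protocol(name))
```

A port named `server` matches no prefix here, which corresponds to the case where the proxy cannot tell from the name alone that the traffic is gRPC.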

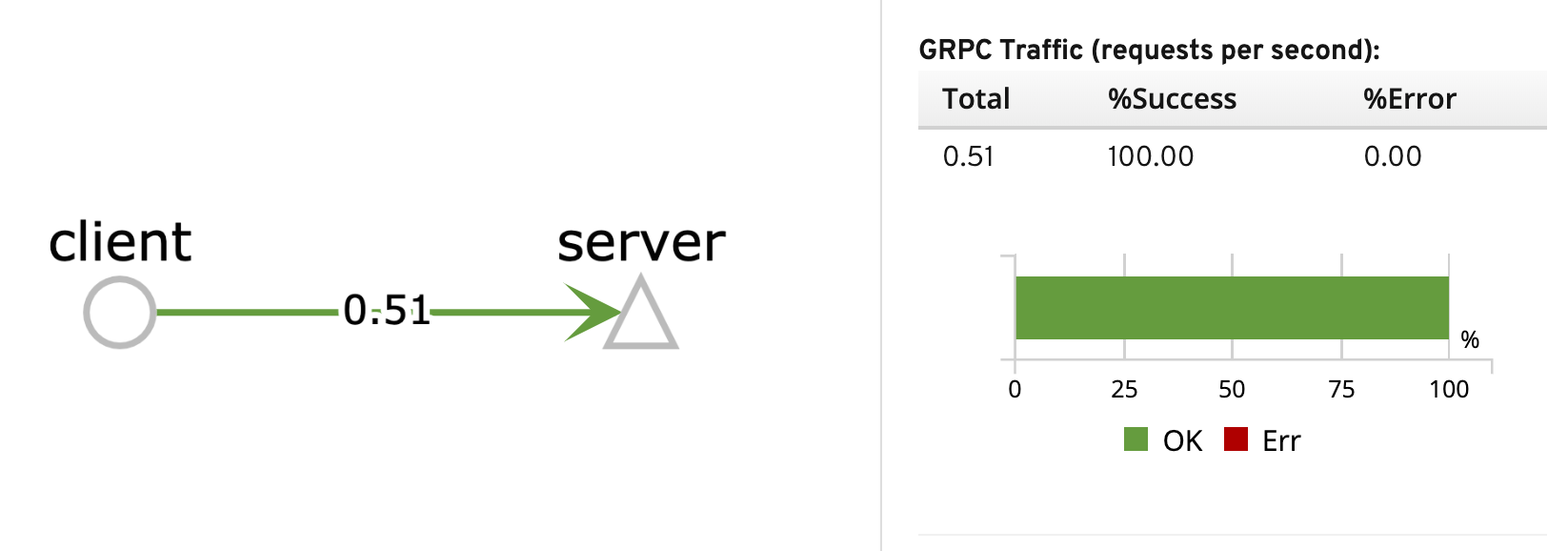

Once we deploy the app, we can see this traffic between client and server in a service graph:

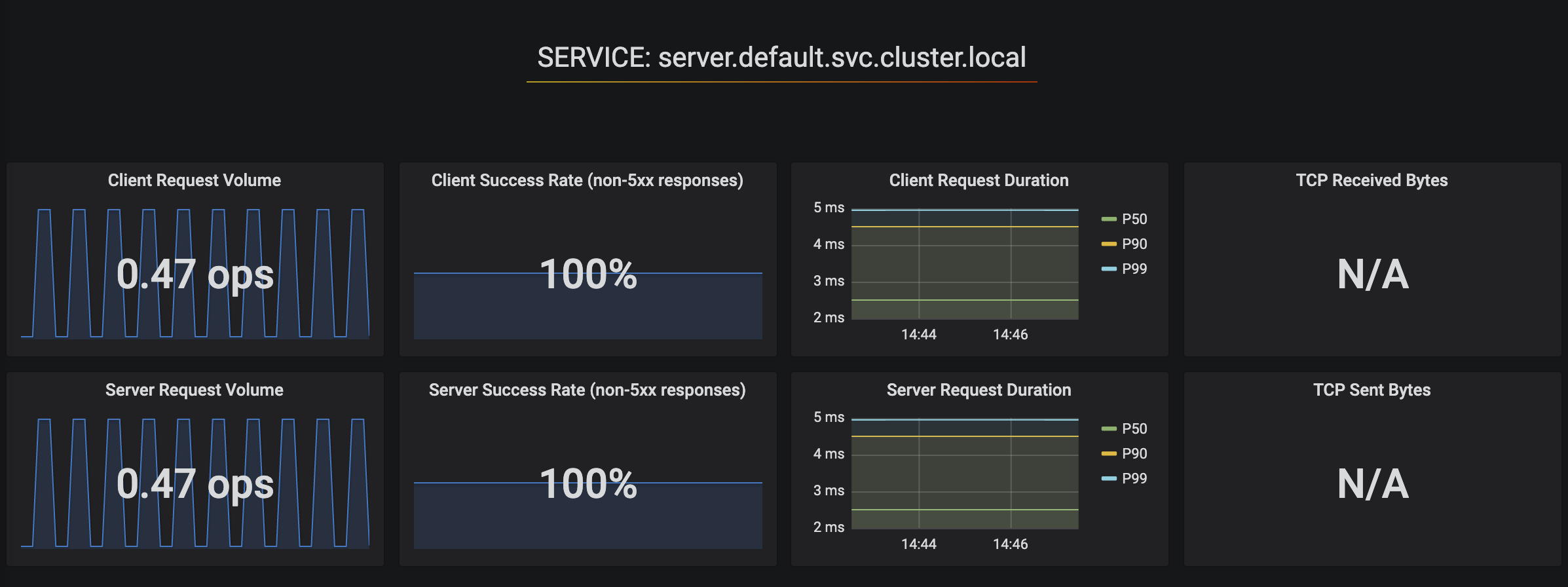

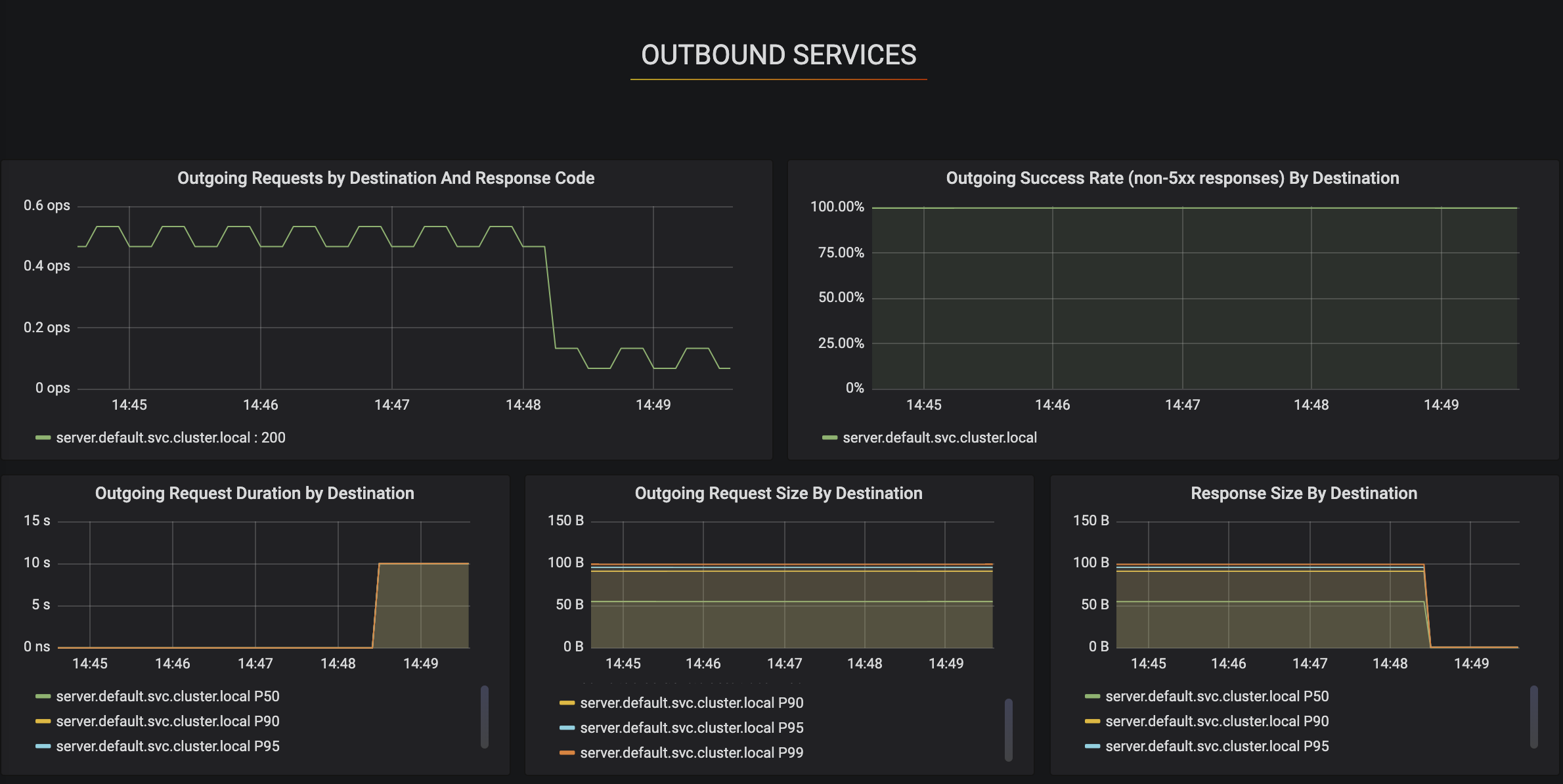

We can also view the server’s gRPC traffic metrics in Grafana:

Then, we can apply an Istio traffic rule to inject a 10-second delay fault into server. You might apply this rule in a chaos testing scenario, to test the resiliency of this application.

    apiVersion: networking.istio.io/v1alpha3
    kind: VirtualService
    metadata:
      name: server-fault
    spec:
      hosts:
      - server
      http:
      - fault:
          delay:
            percentage:
              value: 100.0
            fixedDelay: 10s
        route:
        - destination:
            host: server
            subset: v1
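
As a rough model of what this rule does to the client, the sketch below applies a fixed delay to a given percentage of requests and compares the total latency against a client deadline. The 5-second deadline and 50 ms server latency are assumed values for illustration only; the demo's actual client timeout is not stated here.

```python
import random


def injected_delay(percentage: float, fixed_delay_s: float, rng: random.Random) -> float:
    """Return the delay in seconds that a fixed-delay fault adds to one request."""
    # The fault applies to `percentage` percent of requests; the rest pass through untouched.
    return fixed_delay_s if rng.random() * 100.0 < percentage else 0.0


def rpc_outcome(server_latency_s: float, delay_s: float, client_deadline_s: float) -> str:
    """Classify a call by comparing total latency with the client's deadline."""
    total = server_latency_s + delay_s
    return "DEADLINE_EXCEEDED" if total > client_deadline_s else "OK"


rng = random.Random(0)
outcomes = [
    rpc_outcome(0.05, injected_delay(100.0, 10.0, rng), client_deadline_s=5.0)
    for _ in range(20)
]
print(set(outcomes))
```

With `value: 100.0` every request is delayed, so any client deadline shorter than 10 seconds is exceeded on every call. Lowering the percentage would delay only a share of the calls.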

This causes the client RPC to time out (Outgoing Request Duration):

To learn more about gRPC and Istio: